Part II

Though I officially started 0xmusic sometime in 2021, I realized that the seed it grew out of had been planted during some particularly frustrating middle-school piano lessons.

During those lessons, my piano teacher would insist that I should play the notes exactly as I saw them on the staff, and I’d often fake it and play by ear. Occasionally I’d get caught, and I would feel the wrath of a wooden ruler snapping at my fingers. I tried really hard to read the notes as I saw them, but the truth was I found the musical notation far too cumbersome. Instead of feeling the joy I felt singing harmonies to Beatles songs with my cousins during summers at our grandmother’s house, I felt the all-too-familiar dread and apathy evoked by my math tuitions.

As I outgrew ornery piano tutors and progressed to playing pop favorites on stage, I started to suspect that my annoyance with this notation was a symptom of the notation’s deficiency, not my own.

Some of the big problems I’ve always had with traditional musical notation are:

Mental gymnastics as a barrier to reading & playing music: a pretty bad separation of concerns at best.

Hard to parse out inherent structures and recursions within pieces: I’d like to be able to look at the sheet music for a piece and “get a sense” of its structure & feel. It’s pretty hard to do this with conventional sheet music notation.

Horribly verbose: A contributing factor to the above issue is that there’s so much copy-and-paste. This is exacerbated by popular music’s steadily growing tendency toward increased repetition.

Entirely static: The idea of a piece “changing over time” is incomprehensible in such a rigid framework.

To be clear, I do believe that conventional musical notation served its purpose in an era when transcribing music was understandably hard. The fact that Brahms’ Symphony №4 can be played by an orchestra of musicians who’ve only seen the score a couple of times is a testament to how precise a language it is. I use it quite a bit now when communicating ideas to session musicians.

All I’m saying is that sometimes when you have a hammer, everything looks like a nail.

Generative Music is the Solution

As a software engineer, it occurred to me that we have tools to solve adjacent problems. Programming paradigms like imperative programming and procedural programming have structures like loops and functions that can help alleviate the problems relating to readability and verbosity in general. Additionally, functional programming elevates some of these ideas to a mathematical art form, using lambda calculus and recursions to succinctly represent complex transformations. This is apparent in languages like Haskell and Scala.

So Why is Today’s Generative Music NGMI?

It’s actually very simple: generative music today just isn’t that good.

Several prospective generative music artists have run with this framing of the problem, yet there haven’t been significant breakthroughs in pop culture that we can easily point out. The biggest “victory” in this space is still Brian Eno, even after decades.

Within the NFT space, there are a few artists who are cited for making “technological innovations” with generative music. Though this art has garnered a handful of ardent supporters, it just hasn’t penetrated mainstream culture. Traditional art, at least when it comes to music, still seems to be winning the day.

Exploring New Space

A well-coded generative music algorithm in theory also has another leg up over traditional musical composition: reducing human bias. As artists, our natural inclination is to draw from our existing vocabulary to represent certain emotions. Usually, this manifests in ways such as relying on certain “go-to” chords, harmonies, transitions, etc. to convey tension and resolution. Unfortunately, this can result in a lot of repetition of ideas. Think about a band where every song sounds basically the same (looking at you, Oasis). Generative music (at least in theory) is not saddled with these same sorts of biases. I’ve routinely been surprised by the output of some of the genres coded in 0xmusic — and I’m the one who wrote the original code!

One of the big ideas here is pivoting from the idea of musical composition toward functional composition: creating layers of transitions that can be composed by an underlying rule engine. Composition now becomes a game of understanding how implementation details of that rule engine help forward the larger story you’re trying to narrate as an artist.
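As a rough illustration of what functional composition can look like in code, here is a minimal sketch. The helper names (`pipe`, `transpose`, `retrograde`, `repeat`) are hypothetical and are not taken from the 0xmusic codebase; phrases are assumed to be arrays of semitone offsets.

```javascript
// Apply a list of transformations to a phrase, left to right.
const pipe = (...fns) => (phrase) => fns.reduce((acc, fn) => fn(acc), phrase);

// Shift every note by a number of semitones.
const transpose = (semitones) => (phrase) => phrase.map((n) => n + semitones);

// Play the phrase backwards.
const retrograde = (phrase) => [...phrase].reverse();

// Repeat the phrase a number of times.
const repeat = (times) => (phrase) =>
  Array.from({ length: times }, () => phrase).flat();

const motif = [0, 4, 7]; // a major triad, as offsets from the tonic
const variation = pipe(transpose(5), retrograde, repeat(2));

console.log(variation(motif)); // [ 12, 9, 5, 12, 9, 5 ]
```

Each transformation is small and reusable, so a long passage can be described as a short chain of named steps rather than written out note by note.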

To make some of these ideas feel more concrete, let’s look at a breakdown of a hypothetical song arrangement of 0xmusic.

Song Structure & Arrangement

Building a melodic framework

Let’s look at this hypothetical construction.

Legend — the numbers in this graph are based on 12-tone equal temperament, from which one could derive the Scientific Pitch Notation by simply adding an offset.

Here we have a directed acyclic graph to represent a family of passages in the major diatonic scale. To get a specific sequence, the algorithm could perform a randomized depth-first walk: the bolded arrows show one such configuration. This could form the melodic framework for the verse. We can repeat this process on either this graph or a different one, and call that the chorus, for example. For each of these song sections we could construct basslines, rhythm, and lead sections, using the proposed bag of notes.
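The walk described above can be sketched in a few lines. The graph below is invented for illustration (it is not the graph from the figure), and using MIDI note numbers as the offset scheme is an assumption; the function names are hypothetical.

```javascript
// Hypothetical DAG: nodes are semitone offsets from the tonic in 12-TET,
// restricted to the major scale. Every edge points upward, so there are no cycles.
const majorGraph = {
  0: [2, 4, 7],
  2: [4, 5],
  4: [5, 7],
  5: [7, 9],
  7: [9, 11],
  9: [11],
  11: [],
};

// Start at `start` and follow one randomly chosen outgoing edge at a time
// until a node with no outgoing edges is reached.
function randomWalk(graph, start, rng = Math.random) {
  const path = [start];
  let node = start;
  while (graph[node].length > 0) {
    const choices = graph[node];
    node = choices[Math.floor(rng() * choices.length)];
    path.push(node);
  }
  return path;
}

// Add an offset to get a MIDI note number (60 is middle C), then name it
// in Scientific Pitch Notation.
const NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"];
function toPitchName(offset, tonicMidi = 60) {
  const midi = tonicMidi + offset;
  return NOTE_NAMES[midi % 12] + (Math.floor(midi / 12) - 1);
}

const verse = randomWalk(majorGraph, 0);
console.log(verse.map((n) => toPitchName(n)));
```

Calling `randomWalk` again, on this graph or another one, gives a different passage that could serve as the chorus.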

Adding rhythm and building the song structure

If we had the snare, the bass drum, and the hi-hats to work with, we could randomly arrange the snare and bass drum on the upbeats, downbeats and backbeats, while the hi-hats could go on the eighths or the sixteenths. We could vary intensity, and also give each of those instruments space.
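One way to picture this is a 16-step grid for a single 4/4 bar. The sketch below is a simplified assumption about how such a beat might be generated, not the 0xmusic implementation; `makeBeat` and its `density` parameter are hypothetical.

```javascript
// Build one bar of drums on a grid of sixteenth notes.
// `density` is the chance that an optional bass drum hit is kept;
// lower values leave more space in the bar.
function makeBeat(rng = Math.random, density = 0.5) {
  const steps = 16;
  const kick = new Array(steps).fill(0);
  const snare = new Array(steps).fill(0);
  const hihat = new Array(steps).fill(0);

  // Hi-hats land on every eighth (stride 2) or every sixteenth (stride 1).
  const hatStride = rng() < 0.5 ? 2 : 1;

  for (let i = 0; i < steps; i++) {
    const onBeat = i % 4 === 0; // quarter-note positions
    const upbeat = i % 4 === 2; // the "and" of each beat
    const backbeat = i === 4 || i === 12; // beats 2 and 4

    // The bass drum always hits beat 1, and optionally other beats and upbeats.
    if (i === 0 || ((onBeat || upbeat) && !backbeat && rng() < density)) {
      kick[i] = 1;
    }
    // The snare always hits the backbeats, and occasionally a free upbeat.
    if (backbeat || (upbeat && !kick[i] && rng() < density / 2)) {
      snare[i] = 1;
    }
    if (i % hatStride === 0) hihat[i] = 1;
  }
  return { kick, snare, hihat };
}

console.log(makeBeat());
```

Intensity could be layered on top by storing a velocity between 0 and 1 in each cell instead of a plain 1.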

Once we’ve constructed a beat, we could start adding the layers in, one by one. We could start with the bassline. We could either bias it to the bass drum to give that “bass lock-in” effect, or place it on the offbeat for a more syncopated effect, or do a combination of both. We could also remove bass notes from other sections of the bar to further emphasize the rhythmic panache of the piece.
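The three bassline options can be sketched as a single function over a bass drum pattern. This is an illustrative assumption rather than the actual 0xmusic code; `makeBassline`, its mode names, and the example notes are hypothetical.

```javascript
// Place bass notes on a 16-step grid.
//   "lock"    : play only where the bass drum hits
//   "offbeat" : play only on the "and" of each beat
//   "both"    : play on either
// Steps without a note are null, which leaves space in the bar.
function makeBassline(kick, notes, mode = "lock", rng = Math.random) {
  return kick.map((hit, i) => {
    const offbeat = i % 4 === 2;
    const play =
      mode === "lock" ? hit === 1 :
      mode === "offbeat" ? offbeat :
      hit === 1 || offbeat;
    return play ? notes[Math.floor(rng() * notes.length)] : null;
  });
}

// Example bass drum pattern and a small bag of notes (MIDI numbers for C2 and G2).
const exampleKick = [1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 0, 0, 0];
const bagOfNotes = [36, 43];

console.log(makeBassline(exampleKick, bagOfNotes, "lock"));
console.log(makeBassline(exampleKick, bagOfNotes, "offbeat"));
```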

The other layers could be added in using similar ideas.

Tweaking the tone

One of the advantages of using Web Audio is that you have access to the underlying DSP, which basically means the world is your oyster when it comes to shaping the tone. For example, the bass and bass drum could be compressed to get up front and center in the mix, while the lead portions could be given a little bit of reverb to provide a little more three-dimensionality to the mix.
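As a hedged sketch of that kind of tone shaping, the functions below build a compressor for the low end and a simple convolution reverb for the lead. The node types and methods are standard Web Audio API calls, but the function names, parameter values, and synthetic impulse response are assumptions chosen for illustration, not the settings used in 0xmusic.

```javascript
// Compress bass and bass drum so they sit up front in the mix.
// `ctx` is expected to be an AudioContext.
function buildBassChain(ctx) {
  const compressor = ctx.createDynamicsCompressor();
  compressor.threshold.value = -24; // dB
  compressor.ratio.value = 6;
  compressor.attack.value = 0.005; // seconds
  compressor.release.value = 0.15; // seconds
  compressor.connect(ctx.destination);
  return compressor; // connect bass and bass drum sources to this node
}

// Synthetic impulse response: noise with a cubic decay envelope.
function makeImpulse(sampleRate, seconds, rng = Math.random) {
  const length = Math.floor(sampleRate * seconds);
  const data = new Float32Array(length);
  for (let i = 0; i < length; i++) {
    data[i] = (rng() * 2 - 1) * Math.pow(1 - i / length, 3);
  }
  return data;
}

// Mix a little reverb (the "wet" signal) in with the dry lead.
function buildLeadChain(ctx, wet = 0.25) {
  const input = ctx.createGain();
  const convolver = ctx.createConvolver();
  const wetGain = ctx.createGain();

  const impulse = makeImpulse(ctx.sampleRate, 1.5);
  const buffer = ctx.createBuffer(1, impulse.length, ctx.sampleRate);
  buffer.copyToChannel(impulse, 0);
  convolver.buffer = buffer;
  wetGain.gain.value = wet;

  input.connect(ctx.destination); // dry path
  input.connect(convolver); // wet path
  convolver.connect(wetGain);
  wetGain.connect(ctx.destination);
  return input; // connect lead sources to this node
}
```

In a browser, both functions would be called with `new AudioContext()`, and each instrument's source node would then be connected to the node returned by the matching chain.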

As my band’s producer, I have found that the one key to improving the quality of mixes is to have a set of speakers with an absolutely flat response. 0xmusic was mixed at a professional recording studio, using two sets of speakers with a flat EQ response.

Generating a distinct piece each time you hit play

This is where it all comes together: all of the steps above can be rinsed and repeated each time the play button is pressed. Depending on how many “random” choices the algorithm is making, you could end up with a practically infinite number of pieces. If there were 40 coin flips, for example, we’d be looking at 2^40, or roughly 1.1e+12, pieces.
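The count is just the product of the number of options at each independent choice. A small sketch, with a hypothetical `countPieces` helper:

```javascript
// Multiply the number of options available at each independent random choice.
function countPieces(optionCounts) {
  return optionCounts.reduce((total, n) => total * n, 1);
}

// Forty coin flips: forty choices with two options each.
const fortyCoinFlips = new Array(40).fill(2);
console.log(countPieces(fortyCoinFlips)); // 1099511627776
```

Choices with more than two options, such as picking one of three outgoing edges in a graph, grow the total even faster.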

0xmusic reconstitution guide

0xmusic is built to last an eternity because the traits, the metadata, and all the code for the artwork are on-chain. Though the art is rendered from IPFS, if IPFS ever went down, you could still reconstitute your artwork from methods exposed on the smart contract. This section will serve as a guide to reconstruct 0xmusic in its totality from the Ethereum blockchain. All you will need is access to Etherscan and an environment to run JavaScript (we recommend Visual Studio Code). The steps to reconstitute the art are shown below:

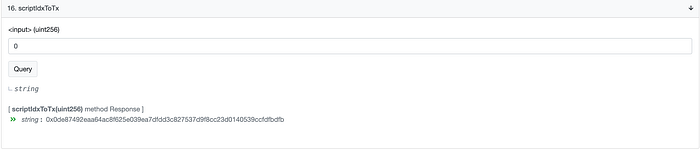

1. Navigate to 0xmusic’s smart contract

2. Enter 0 into this view function and hit “query”

3. Navigate to the transaction associated with that id

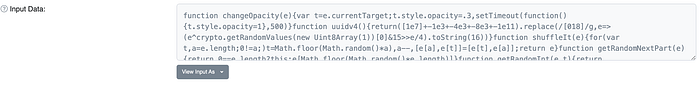

4. Scroll down and click on “Click to see More”. Then go to the Input Data and make sure to view it as UTF-8 by clicking “View Input As” and selecting the option from there.

5. Copy this into a text editor.

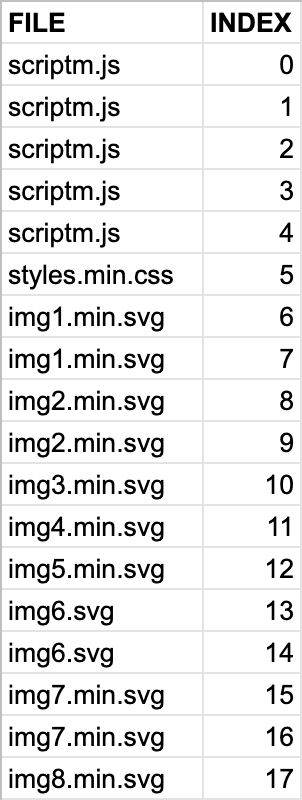

6. Repeat steps 1 through 5, changing the index number each time, and copy and paste the outputs as indicated in the table below. When you paste the code into the corresponding file, make sure you leave no spaces between consecutive pastes and that the file names match exactly as mentioned below. So the outputs from the transactions associated with indices 0, 1, 2, 3, 4 should all be concatenated into a file called “scriptm.js”. The output of index 5 goes in the file styles.min.css, the outputs of indices 6 and 7 are concatenated into the file img1.min.svg, and so on.

Once again, it is important that you name the files as mentioned above and when pasting the transaction output into the same file, leave no space.

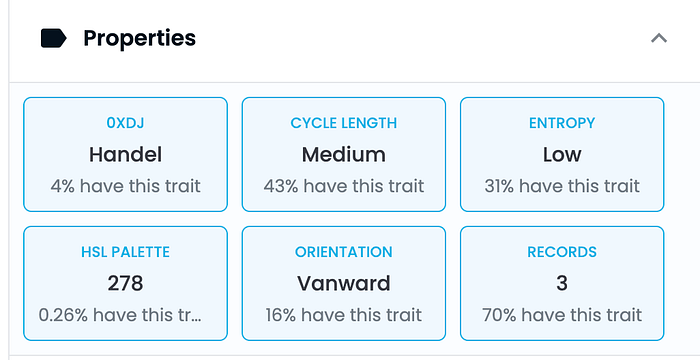
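If you would rather script the concatenation than paste by hand, a minimal sketch could look like the following. The `assembleFiles` helper is hypothetical, and the manifest only lists the files named in step 6; the remaining entries should be filled in from the table.

```javascript
// Partial manifest: file name -> transaction indices, in paste order.
// Only the entries spelled out in step 6 are listed here.
const manifest = {
  "scriptm.js": [0, 1, 2, 3, 4],
  "styles.min.css": [5],
  "img1.min.svg": [6, 7],
};

// `chunksByIndex` maps each index to the UTF-8 input data copied from Etherscan.
// Chunks are joined with an empty string so that no spaces are introduced.
function assembleFiles(chunksByIndex, fileManifest) {
  const files = {};
  for (const [name, indices] of Object.entries(fileManifest)) {
    files[name] = indices
      .map((i) => {
        if (!(i in chunksByIndex)) {
          throw new Error(`Missing chunk for index ${i}`);
        }
        return chunksByIndex[i];
      })
      .join("");
  }
  return files;
}
```

The returned object maps each file name to its full contents, which can then be saved to disk under exactly those names.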

7. From OpenSea or any other marketplace, get the relevant traits for your 0xD — HSL Palette, Records, 0xDJ & Song Length.

8. For the algorithm to run, we need to extract numerical identifiers that the code understands from the traits above.

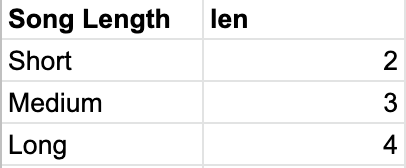

Use the following conversion table to get the genre from the DJ trait you have as well as len from the song length trait that you have. The id parameter is the same as the HSL Palette trait and bg is the same as the records trait.

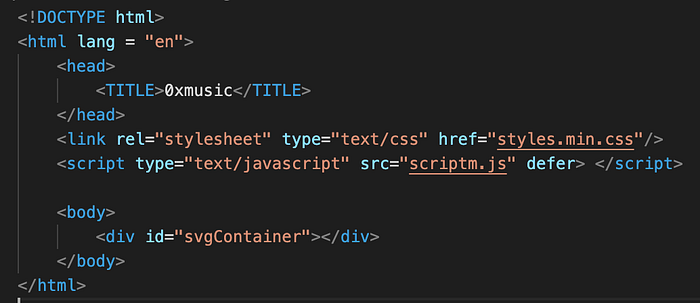

9. Now, in an HTML file in the project, invoke the JavaScript and include the CSS as shown below. Call it index.html, for example.

10. Serve the HTML file using Live Server or an equivalent and you should be able to see the art!

index.html?genre={genre}&id={hsl_palette}&bg={records}&len={len}
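To avoid typos when filling in that URL, the query string can be built programmatically. This is a small sketch; `buildArtUrl` is a hypothetical helper, and the numbers in the example are placeholders rather than real trait values from the conversion table.

```javascript
// Build the page URL from the four numerical identifiers.
function buildArtUrl({ genre, id, bg, len }, page = "index.html") {
  const params = new URLSearchParams({ genre, id, bg, len });
  return `${page}?${params.toString()}`;
}

// Placeholder values; substitute the ones derived from your own traits.
console.log(buildArtUrl({ genre: 1, id: 2, bg: 3, len: 4 }));
// index.html?genre=1&id=2&bg=3&len=4
```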