Create Artistic Effect by Stylizing Image Background — Part 1: Project Intro

Segmentation + Style Transfer

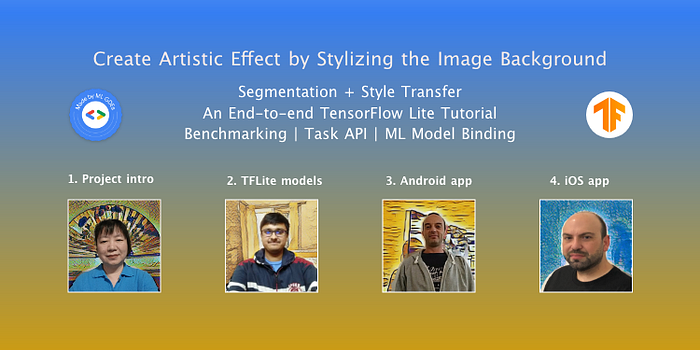

Written by Margaret Maynard-Reid, ML GDE

This is part 1 of an end-to-end TensorFlow Lite tutorial, written as a team effort, on how to combine multiple ML models to create artistic effects by segmenting an image and then stylizing the image background with neural style transfer.

The tutorial is divided into four parts. Feel free to follow along or skip to the part that is most interesting or relevant to you:

- Part 1: Project Intro (this one), Margaret Maynard-Reid

- Part 2: TensorFlow Lite models, Sayak Paul

- Part 3: Android implementation, George Soloupis

- Part 4: iOS implementation (coming soon…), Patrick Haralabidis

The code is available in this GitHub repository, where you can also find details on the TensorFlow Lite models and the mobile implementations.

Tutorial Objectives

Here are the main objectives of the tutorial:

- Help you understand how to apply multiple ML models to real-world scenarios for creating artistic effects.

- Provide you with a reference on how to save, convert, optimize and benchmark TensorFlow Lite models.

- Guide you through creating a mobile application with TensorFlow Lite models, using the Segmentation Task API and Android Studio ML Model Binding.

- Show you a practical implementation of how to chain multiple ML models on a mobile application.

Segmentation + Style Transfer

Using AI/ML to assist the creation of art and design has become increasingly important for software features, professional artists and hobbyists. There are plenty of examples from Snapchat, Adobe Photoshop, Nvidia and others.

Background effects

Background removal or blurring can help preserve privacy or express the participant’s emotion. It is an important feature that you may find in conferencing applications such as Google Hangouts or Microsoft Teams.

Style transfer

Another common scenario is transforming the style of an image, which plays a large part in defining a piece of artwork or an artist.

While neural style transfer can create artistic effects, it cannot mimic the exact style of a particular artist or type of art. Another drawback is that when the transformation is applied to certain subjects, such as human faces or portraits, the output image may look artistic but not very appealing.

Therefore, stylizing only the segmented background results in a much more elegant image for a photo portrait. This also mimics a common practice in photography and graphic design: changing an artwork’s background texture or style.

Project Overview

When applying ML models to real-world applications, we often need to chain multiple computer vision models together. In this tutorial, we show you how to combine multiple ML models to create artistic effects by segmenting an image and then stylizing the image background with neural style transfer.

In this project we used two ML models: one for segmentation and the other for style transfer.

1. Segmentation model

The segmentation model is a DeepLabV3-based semantic segmentation model that assigns each pixel in an image a class label, such as person or chair. The idea is depicted in the following figure:
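In code terms, assigning a class label to each pixel amounts to taking, for every pixel, the class with the highest score. The sketch below is only an illustration under assumptions: the three-entry `LABELS` list, the nested-list layout of `scores`, and the helper names `label_map` and `class_mask` are hypothetical and do not come from the project's model or code.

```python
# Hypothetical label list for illustration only; the real model's labels may differ.
LABELS = ["background", "person", "chair"]


def label_map(scores):
    """Return the index of the highest-scoring class for every pixel.

    scores[y][x] is assumed to be a list with one score per class.
    """
    return [
        [max(range(len(pixel)), key=pixel.__getitem__) for pixel in row]
        for row in scores
    ]


def class_mask(labels, class_name):
    """Return a binary mask: 1 where the pixel has the given class, else 0."""
    index = LABELS.index(class_name)
    return [[1 if label == index else 0 for label in row] for row in labels]


# A toy 2x2 "image" with three class scores per pixel.
scores = [
    [[0.9, 0.1, 0.0], [0.2, 0.7, 0.1]],
    [[0.1, 0.8, 0.1], [0.3, 0.1, 0.6]],
]

labels = label_map(scores)
person_mask = class_mask(labels, "person")
print(labels)       # [[0, 1], [1, 2]]
print(person_mask)  # [[0, 1], [1, 0]]
```

A binary mask like `person_mask` is what later separates the portrait from the background.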

2. Style transfer model

For the style transfer model, we used models trained following the approach proposed in the paper “Exploring the structure of a real-time, arbitrary neural artistic stylization network”. These models let us control the amount of styling we would like to see in the final stylized image. Here is an example:

Reference: the official TensorFlow Lite documentation
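One common way to control the amount of styling with this family of models is to linearly blend two style vectors: one computed from the style image and one computed from the content image itself. The sketch below assumes that scheme; whether the sample app uses it is not stated here, and the function name `blend_bottlenecks`, the parameter `content_ratio`, and the two-element vectors are made up for illustration.

```python
def blend_bottlenecks(style_vec, content_vec, content_ratio):
    """Linearly interpolate between two style vectors.

    content_ratio = 0.0 keeps the full style of the style image;
    content_ratio = 1.0 keeps the content image's own style (no restyling).
    """
    if not 0.0 <= content_ratio <= 1.0:
        raise ValueError("content_ratio must be between 0 and 1")
    if len(style_vec) != len(content_vec):
        raise ValueError("style vectors must have the same length")
    return [
        content_ratio * c + (1.0 - content_ratio) * s
        for s, c in zip(style_vec, content_vec)
    ]


# Toy vectors; real style vectors are much longer.
style_of_painting = [1.0, 0.0]
style_of_photo = [0.0, 1.0]

print(blend_bottlenecks(style_of_painting, style_of_photo, 0.0))  # [1.0, 0.0]
print(blend_bottlenecks(style_of_painting, style_of_photo, 0.5))  # [0.5, 0.5]
print(blend_bottlenecks(style_of_painting, style_of_photo, 1.0))  # [0.0, 1.0]
```

The blended vector would then be passed to the transform step in place of the original style vector.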

Application design

We chained the two models to produce a stylized image as follows:

- Take a photo or choose an image from the gallery as the input.

- Apply the segmentation model to the input image.

- Apply the style transfer model to the image background (content image).

- Blend the masked out foreground image with the stylized background to produce the final image.

Reference: Colab notebook by Khanh LeViet

The segmentation model applies semantic segmentation, which assigns each pixel to a class. The model can work on multiple objects in an image, but for our sample app we chose to work with selfies or human portraits. Each image therefore has a portrait and a background, and we applied style transfer to the background only.

Technically, style transfer could be applied to the portrait as well, but the image looks more appealing with the background stylized instead. Stylizing the background is also more in line with the real-world practice of using different backdrops in photography.
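The chaining described in this section can be sketched as a small pipeline: segment, stylize, then blend using the mask. This is a minimal illustration and not the app's implementation. The functions `fake_segment` and `fake_stylize` are hypothetical stand-ins for the two TensorFlow Lite models, images are toy grayscale grids, and for simplicity the sketch stylizes the whole image and keeps the stylized pixels only where the mask marks background.

```python
def blend(original, stylized, person_mask):
    """Keep original pixels where the mask is 1 and stylized pixels elsewhere."""
    return [
        [o if m else s for o, s, m in zip(o_row, s_row, m_row)]
        for o_row, s_row, m_row in zip(original, stylized, person_mask)
    ]


def stylize_background(image, segment, stylize):
    """Chain the two models: segment, stylize, then blend."""
    person_mask = segment(image)   # 1 = portrait, 0 = background
    stylized = stylize(image)      # stylized version of the whole image
    return blend(image, stylized, person_mask)


# Hypothetical stand-ins for the real models, on grayscale values 0..255.
def fake_segment(image):
    # Pretend bright pixels belong to the person.
    return [[1 if px > 128 else 0 for px in row] for row in image]


def fake_stylize(image):
    # Pretend "style" is simply inverting the pixel values.
    return [[255 - px for px in row] for row in image]


photo = [
    [200, 10],
    [30, 220],
]
result = stylize_background(photo, fake_segment, fake_stylize)
print(result)  # [[200, 245], [225, 220]]
```

Passing the models in as functions keeps the chaining logic independent of how each model is actually run.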

UI/UX design

Although this is just a sample app, a carefully designed user experience is needed in order to accommodate multiple ML tasks:

- Input image: first capture an image with the camera or select one from the gallery.

- Apply the segmentation model to remove the image background.

- Apply style transfer to the image background.

- Combine the foreground image with the stylized background.

A simple design lets all the images and the complex logic fit on the second screen.

Now that you have a good idea of what this project is about, let’s dive into part 2 of the tutorial, written by Sayak, which covers how to create the TensorFlow Lite models.

Acknowledgements

This project is part of the ongoing effort to create End-to-End TensorFlow Lite Tutorials with sample code. It’s a collaboration between ML GDEs and members of the community.

Check out the End-to-End TensorFlow Lite Tutorials repo for a list of ideas, work in progress and help needed.

Check out the awesome-tflite repo for an awesome list of TensorFlow Lite models, samples, tutorials, tools and learning resources.

We would like to thank Khanh LeViet and Lu Wang of the TensorFlow Lite team for their continuous support.